A Structure-Driven Approach to Generating Theory from Data

1. The Problem: When Facts Are Not Enough

Technological innovations driven by AI and the Fourth Industrial Revolution are emerging at an unprecedented pace.

Yet the markets shaped by these innovations remain unstable, and objective data that could reliably predict their future is scarce or absent.

In such environments, traditional research approaches reach their limits.

Organizations that rely on “objective facts” and data-driven validation often become paralyzed when facing emerging domains where data is sparse, ambiguous, or nonexistent. Meanwhile, those willing to act under uncertainty—historically exemplified by companies such as Amazon and Google during the early internet era—can establish overwhelming advantages.

The fundamental question is:

How can we make decisions when the future cannot be derived from existing facts?

2. From Concept Research to Conceptual Investigation

In the 1990s, Kunihiro Tada introduced the idea of Concept Research to address this challenge.

The key insight was that inquiry should not be limited to observable “facts,” but must also engage with underlying “concepts” that shape perception.

Humans believe they observe objective reality.

In practice, however, perception is mediated by implicit assumptions embedded at a subconscious level. What we see is not the world itself, but a projection structured by prior concepts.

This epistemological view resonates with a wide range of thinkers—from Buddhist philosophy (Nagarjuna, Vasubandhu) to Kant, Husserl, and Jung. However, while philosophically profound, such perspectives have traditionally lacked practical implementation in business decision-making.

Concept Research was an early attempt to bridge this gap.

3. A Limitation: Human-Dependent Concept Formation

Concept Research relied on human interpretation to extract and organize concepts.

Methods such as the KJ Method (affinity diagramming) and the Grounded Theory Approach (GTA) demonstrated that structure and theory can be derived from data without predefined hypotheses.

However, these approaches have inherent limitations:

- They depend heavily on human cognition

- They lack reproducibility

- They are difficult to scale

- They require significant time and expertise

As a result, while concept-oriented inquiry was theoretically sound, it remained operationally constrained.

4. A Turning Point: Structure Without Assumptions

A crucial theoretical insight comes from the Ugly Duckling Theorem, formulated by Satosi Watanabe, which states:

From a purely logical standpoint, any two distinct objects are equally similar unless some attributes are weighted as more important than others.

This implies that meaning does not exist inherently in data.

Meaning emerges only when structure is imposed or discovered.
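The theorem can be checked on a toy case. The sketch below is a simplified, extensional reading: it assumes a predicate is nothing more than a subset of the objects (those for which it holds) and that every predicate carries equal weight. The function name `shared_predicate_counts` is illustrative and not part of the original formulation.

```python
from itertools import combinations, product


def shared_predicate_counts(n_objects):
    """Count, for every pair of objects, how many predicates hold for both.

    Assumption: a predicate is modelled as a tuple of booleans, one per
    object, so n objects admit 2**n predicates, all weighted equally.
    """
    # predicate[i] is True when the predicate holds for object i.
    predicates = list(product([False, True], repeat=n_objects))
    counts = {}
    for a, b in combinations(range(n_objects), 2):
        counts[(a, b)] = sum(1 for p in predicates if p[a] and p[b])
    return counts


counts = shared_predicate_counts(4)
print(counts)
```

With four objects, every pair shares the same number of predicates (2^(4-2) = 4), so counting shared attributes alone cannot separate any pair from any other. A difference appears only once some predicates are given more weight than others.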

This realization leads to a fundamental shift:

The problem is not to interpret meaning first,

but to discover structure prior to meaning.

5. Conceptual Investigation: A New Paradigm

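Advances in machine learning, particularly self-organizing models such as the Self-Organizing Map (SOM) and Growing Neural Gas (GNG), together with graph techniques such as the Minimum Spanning Tree (MST), make it possible to extract structure directly from data without predefined hypotheses or categories.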

At the same time, Large Language Models (LLMs) enable automated interpretation of that structure.

This combination leads to a new methodology:

Conceptual Investigation

A hypothesis-free, structure-driven approach to generating theory from data.

Unlike traditional research:

- It does not begin with hypotheses

- It does not rely on human coding

- It does not assume predefined categories

Instead, it follows a different sequence:

Data → Structure → Meaning → Theory

6. Methodological Framework

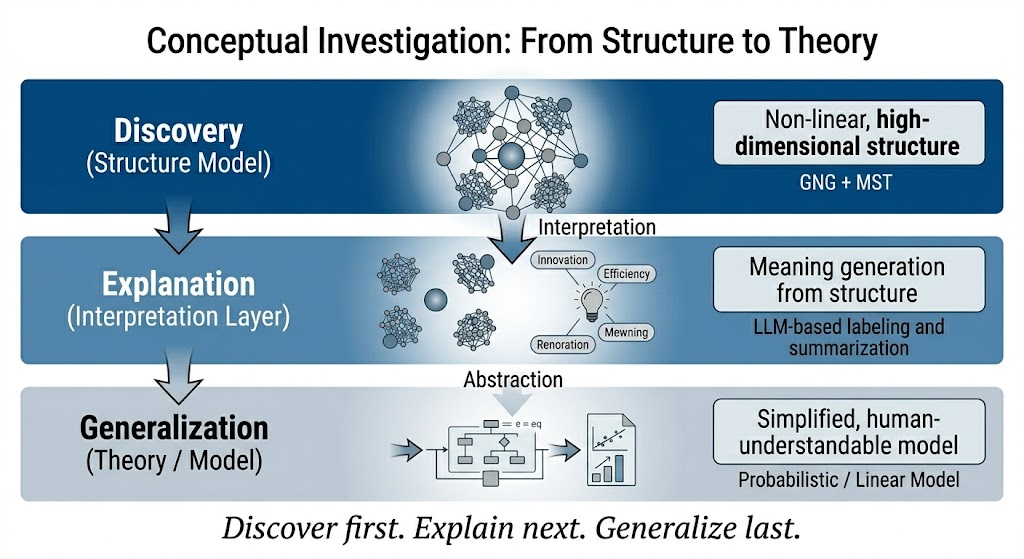

Conceptual Investigation operates through three layers:

Discovery (Structure Model)

Data is transformed into a graph structure over the high-dimensional data space using techniques such as GNG and MST.

This structure captures similarity, density, and relational topology without imposing prior meaning.
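As a rough illustration of the Discovery layer, the following sketch builds a minimum spanning tree over a handful of toy points and splits it into groups by removing the longest edges. It covers only the MST part (GNG is not shown), and several things are assumptions made for the example: the Euclidean distance, the toy coordinates, the function names, and the `n_clusters` parameter, which the caller has to choose.

```python
import math


def minimum_spanning_tree(points):
    """Prim's algorithm on the complete Euclidean graph.

    Returns a list of (distance, i, j) edges, one per non-root point.
    """
    n = len(points)
    in_tree = [False] * n
    best_dist = [math.inf] * n   # cheapest known link from the tree to each point
    best_from = [-1] * n         # tree vertex providing that link
    best_dist[0] = 0.0
    edges = []
    for _ in range(n):
        # Pick the point outside the tree that is closest to it.
        u = min((i for i in range(n) if not in_tree[i]), key=lambda i: best_dist[i])
        in_tree[u] = True
        if best_from[u] != -1:
            edges.append((best_dist[u], best_from[u], u))
        for v in range(n):
            if not in_tree[v]:
                d = math.dist(points[u], points[v])
                if d < best_dist[v]:
                    best_dist[v] = d
                    best_from[v] = u
    return edges


def clusters_from_mst(n, edges, n_clusters):
    """Drop the (n_clusters - 1) longest edges and return connected components."""
    kept = sorted(edges)[: len(edges) - (n_clusters - 1)]
    parent = list(range(n))

    def find(x):
        # Union-find lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for _, i, j in kept:
        parent[find(i)] = find(j)
    groups = {}
    for i in range(n):
        groups.setdefault(find(i), []).append(i)
    return sorted(groups.values())


# Toy data (hypothetical): two well-separated groups of three points each.
points = [(0.0, 0.0), (0.1, 0.2), (0.2, 0.1), (5.0, 5.0), (5.1, 5.2), (4.9, 5.1)]
edges = minimum_spanning_tree(points)
clusters = clusters_from_mst(len(points), edges, n_clusters=2)
print(clusters)
```

No labels or categories enter this computation. The grouping comes from distances alone, although the choice of distance measure and of `n_clusters` still shapes the result.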

Explanation (Interpretation Layer)

LLMs interpret the discovered structure by assigning labels, generating summaries, and articulating relationships between clusters.
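One way the Explanation layer might hand a cluster to an LLM is sketched below. The helper `build_label_prompt`, its parameters, and the prompt wording are all hypothetical. No model is called, because the source does not specify which LLM or API is used.

```python
def build_label_prompt(cluster_id, member_texts, max_examples=5):
    """Assemble a plain-text prompt asking for a label and summary of one cluster.

    Assumption: each cluster member has a short text representation, and a
    few representative members are enough for the model to name the cluster.
    """
    examples = member_texts[:max_examples]
    lines = [
        f"Cluster {cluster_id} contains {len(member_texts)} items.",
        "Representative items:",
    ]
    lines += [f"- {text}" for text in examples]
    lines.append("Propose a short label and a one-sentence summary for this cluster.")
    return "\n".join(lines)


# Hypothetical cluster members, for illustration only.
prompt = build_label_prompt(
    0,
    ["delivery was late", "package arrived damaged", "courier never showed up"],
    max_examples=2,
)
print(prompt)
```

The returned string would then be sent to whichever model the pipeline uses. The structure (which items belong together) is fixed before the model is asked what it means.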

Generalization (Theory Model)

The interpreted structure is abstracted into simplified models—such as probabilistic or linear models—that support human understanding and decision-making.
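A minimal sketch of what a simplified probabilistic model could look like: a conditional probability table estimated by relative frequency from labeled concepts and observed outcomes. The concept labels, outcomes, and records are made-up toy data, and the choice of a frequency table is an assumption. The source does not prescribe a specific model.

```python
from collections import Counter, defaultdict


def conditional_probability_table(records):
    """Estimate P(outcome | concept) from (concept, outcome) pairs by relative frequency."""
    counts = defaultdict(Counter)
    for concept, outcome in records:
        counts[concept][outcome] += 1
    table = {}
    for concept, outcome_counts in counts.items():
        total = sum(outcome_counts.values())
        table[concept] = {o: c / total for o, c in outcome_counts.items()}
    return table


# Hypothetical records: a concept label from the interpretation step, plus an outcome.
records = [
    ("price-sensitive", "churn"),
    ("price-sensitive", "churn"),
    ("price-sensitive", "stay"),
    ("feature-driven", "stay"),
    ("feature-driven", "stay"),
    ("feature-driven", "stay"),
    ("feature-driven", "churn"),
]
table = conditional_probability_table(records)
print(table)
```

A table of this kind is small enough for a person to read directly, which is the point of the Generalization layer: it trades detail for a model that supports discussion and decisions.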

Importantly, these layers are sequential:

Structure precedes meaning.

Meaning precedes theory.

7. Integration of Qualitative and Quantitative Analysis

Conceptual Investigation unifies previously separate domains:

- Qualitative methods (KJ Method, GTA)

- Quantitative models (SOM, GNG, Bayesian belief networks)

- Language-based reasoning (LLMs)

Text, numerical data, and even multimodal information (images, audio) can be represented in a unified vector space, allowing structural analysis across domains.

This enables:

- Digitization of qualitative reasoning

- Integration of heterogeneous data

- Simulation of future scenarios through probabilistic models
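The idea of a unified vector space can be illustrated with a deliberately crude sketch that places a text field and numeric fields in one vector. Everything here is an assumption for illustration: the hashed bag-of-words encoding stands in for whatever embedding a real pipeline would use (more plausibly a learned embedding model), and the function names and the dimension of 16 are arbitrary.

```python
import math
import zlib


def text_vector(text, dim=16):
    """Hashed bag-of-words: each token increments one of `dim` buckets."""
    vec = [0.0] * dim
    for token in text.lower().split():
        # crc32 is used because it is deterministic across runs.
        vec[zlib.crc32(token.encode("utf-8")) % dim] += 1.0
    return vec


def l2_normalize(vec):
    """Scale a vector to unit length; an all-zero vector is returned unchanged."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)


def joint_vector(text, numeric_features, dim=16):
    """Concatenate the normalized text part and the normalized numeric part."""
    return l2_normalize(text_vector(text, dim)) + l2_normalize(numeric_features)


def cosine(u, v):
    """Cosine similarity between two non-zero vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Hypothetical records combining a comment with two numeric measurements.
a = joint_vector("fast delivery low price", [3.0, 4.0])
b = joint_vector("slow delivery high price", [4.0, 3.0])
print(len(a), cosine(a, b))
```

Once heterogeneous records share one vector space, the same structural techniques (distances, graphs, clustering) apply to all of them regardless of where each component came from.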

8. Implications for Decision-Making

Conceptual Investigation shifts the role of research itself.

Traditional research:

- Validates existing hypotheses

- Explains known structures

Conceptual Investigation:

- Discovers unknown structures

- Generates new hypotheses and theories

This has profound implications:

- It enables exploration of emerging markets where data is incomplete

- It reduces dependence on prior assumptions

- It accelerates strategic decision-making

9. Conclusion

Conceptual Investigation represents a fundamental shift in how knowledge is generated.

Rather than asking:

“Is this hypothesis correct?”

it asks:

“What structure exists in the data?”

From that structure, meaning and theory emerge.

Final Definition

Conceptual Investigation

Discovering structure without hypotheses,

and generating theory from that structure.

Closing Insight

Reality is inherently complex and non-linear.

Human understanding, however, requires simplification.

Conceptual Investigation bridges this gap:

It preserves the complexity of reality

while enabling the creation of understandable models.

In doing so, it redefines research itself.